A certification requirement that revealed a deeper product gap

Pipefy was pursuing Gartner BOAT (Business Orchestration and Automation Technologies) certification, a strategic differentiator that would validate the platform's analytical capabilities against industry standards. Process Intelligence wasn't just a feature request; it was a certification requirement and a business imperative.

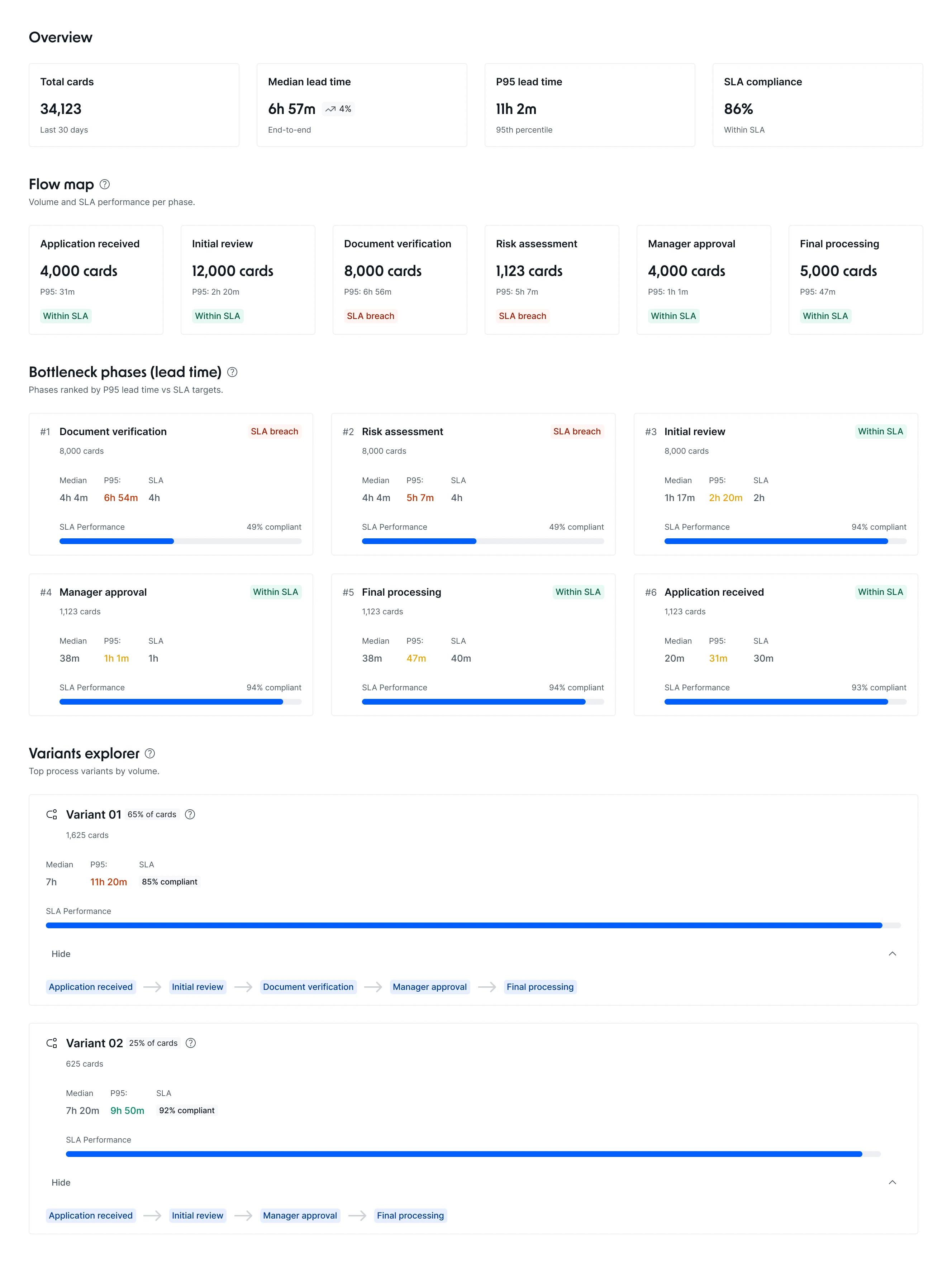

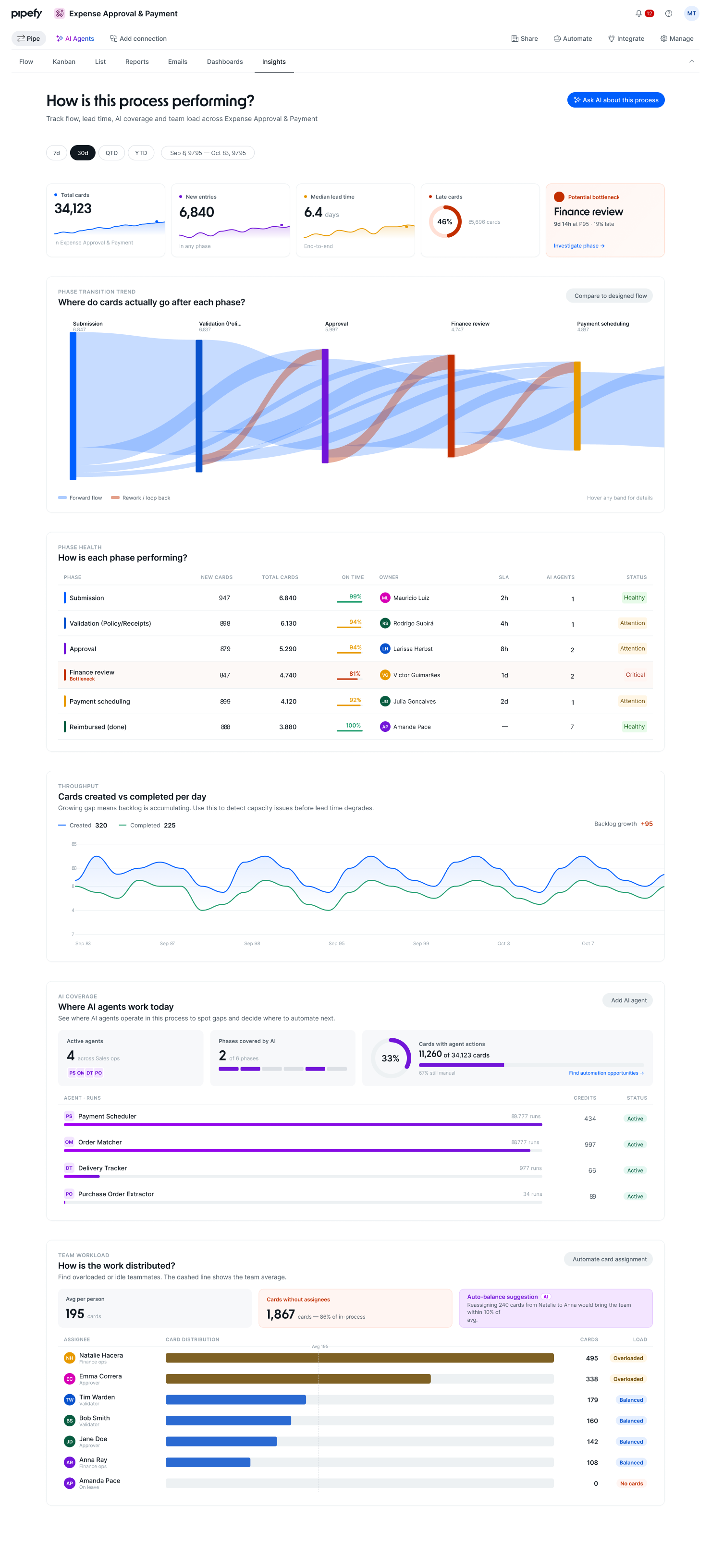

But when we talked to users, we found something more fundamental: existing dashboards were fragmented, static, and required heavy manual interpretation. Customers were exporting data to external analytics tools because Pipefy couldn't answer the questions they actually had:

1. **"Where are my bottlenecks?"**
   No clear view of where cards stalled or accumulated across phases.

2. **"How does my process vary?"**
   No visibility into how processes actually ran in practice compared with the designed flow.

3. **"Which phases cause delays?"**
   Duration data existed but couldn't be linked to systemic patterns or process decisions.

4. **"How do I prove ROI?"**
   No way to measure efficiency gains or justify automation investments with data.